Augmented Edge Enhancement on Google Glass for Vision-Impaired Users

Google Glass provides a unique platform that can be easily extended to become a vision-enhancement tool for people with impaired vision. The authors have implemented augmented vision on Glass that places enhanced edge information over a wearer’s real-world view. This article is based on the paper, “Augmented Edge Enhancement for Vision Impairment Using Google Glass,” to be presented at the 2014 Display Week technical symposium on Wednesday, June 4.

by Alex D. Hwang and Eli Peli

MOST PEOPLE with impaired vision experience reduced visual acuity (VA) and contrast sensitivity (CS). These reduced visual functions have a large impact on the quality of life. For example, people with impaired vision are often unable to recognize faces at an appropriate distance for initial contact, which can be a great social hindrance, especially in senior-citizen communities.

A number of augmented-vision head-mounted-display (HMD) systems have been proposed and prototyped to help with various vision impairments.1 Peli et al.2 developed an augmented image-enhancement device that superimposed an edge image over a wearer’s see-through view. Visibility enhancement came from the high-contrast edge aligned precisely with the see-through view. However, when the camera axis and the HMD’s virtual display are not coaxial, parallax makes alignment of edges with objects at various distances difficult. An on-axis HMD-camera configuration was subsequently prototyped and achieved good alignment across a range of distances,3 but the brightness of the edge images was severely reduced by the optical combining system. Early HMD vision aid prototypes were usually bulky, requiring a separate image-processor unit that had to be carried along with a battery/power unit. They were also heavy, uncomfortable, and unattractive. Therefore, these and some commercial magnifying and light-enhancing (for night blindness) HMD systems designed as visual aids were not successful in the marketplace.

Google Glass, a wearable computer with an optical see-through HMD that was first announced in 2012, provides a unique hardware and software development platform that can be easily extended to vision-enhancement devices for visually impaired people. The Google Glass package is attractive and substantially more comfortable than the aforementioned designs and is, therefore, more suitable for social interactions. Google Glass has a wide-angle camera, a small see-through display, and enough mobile CPU/GPU power to handle the necessary image processing. Although a full Glass API has not yet been provided, its Android-based operating system supports OpenGL ES and camera access.

Goals for Google Glass

Several challenges were apparent to the authors when they began working with Google Glass on creating a new augmented-vision edge-enhancement visual aid. To provide an augmented view that overlaid enhanced edge information accurately over the wearer’s real-world view, spatial alignment between the augmented and real views had to be resolved for the Google Glass hardware configuration. The inherent parallax was not as large as in earlier devices because the Glass camera is close to the display optics (a horizontal displacement of 18 mm). The edge-enhancement method and level of enhancement needed to be user selectable because these depend on factors such as the type and degree of vision loss (which can be progressive or variable), the task at hand, scene content, and image clarity. The user interface for controlling the device parameters had to be simple, intuitive, and quick.

Google Glass Hardware

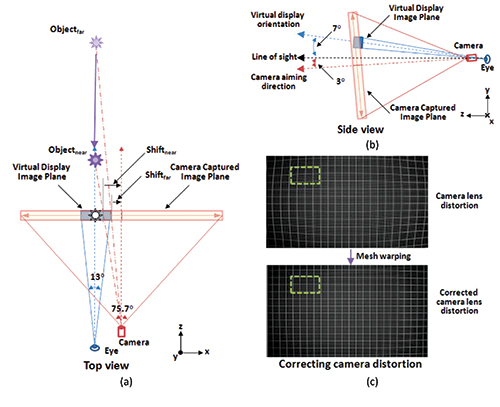

Google Glass has a camera with a relatively large field of view that can capture 75.7° × 58.3° of the world with a resolution of 2528 × 1856 pixels [see Figs. 1(a) and 1(b)]. During video recording, the upper and lower portions of the captured image are cropped to fit the more conventional 16:9 screen ratio and encoded at 1280 × 720 pixels, 30 fps (720p). The display, only available for the right eye, spans a visual angle of 13° × 7.3° at a 640 × 360 pixel resolution [see Figs. 1(a) and 1(b)]. The camera and display optics are encased in a single rigid compartment, so that adjusting the display position also changes the camera’s aiming angle. The camera is set to aim 10° downward relative to the display direction. The display is angled at 7° above the wearer’s line of sight; thus, when a Google Glass wearer looks straight ahead, the camera is aiming 3° downward [see Fig. 1(b)]. The alignment process is simpler when the virtual display plane is perpendicular to the line of sight. In the case of Google Glass, in order to use it as an augmented-reality device, the user has to tilt his/her head down and look 7° upward. The wearer’s active eye and head movements naturally align the virtual display with the center of the wearer’s visual field, bringing the display plane perpendicular to the wearer’s line of sight.
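The geometry quoted above can be checked with a few lines of arithmetic. The following is a minimal sketch that uses only the figures given in the text. The constant and function names are illustrative, and the pixel density assumes that pixels are spread evenly over the field of view, which is only an approximation for a wide-angle lens.

```python
# Figures quoted in the text; all names here are illustrative only.
CAMERA_FOV_DEG = (75.7, 58.3)
CAMERA_RES_PX = (2528, 1856)
DISPLAY_FOV_DEG = (13.0, 7.3)
DISPLAY_RES_PX = (640, 360)
DISPLAY_TILT_UP_DEG = 7.0              # display axis above the line of sight
CAMERA_TILT_BELOW_DISPLAY_DEG = 10.0   # camera axis below the display axis


def pixels_per_degree(res_px, fov_deg):
    """Average pixel density, assuming pixels are evenly spread over angle."""
    return res_px / fov_deg


def camera_aim_relative_to_gaze():
    """Camera aim relative to straight ahead (negative means downward)."""
    return DISPLAY_TILT_UP_DEG - CAMERA_TILT_BELOW_DISPLAY_DEG


display_ppd = pixels_per_degree(DISPLAY_RES_PX[0], DISPLAY_FOV_DEG[0])
camera_ppd = pixels_per_degree(CAMERA_RES_PX[0], CAMERA_FOV_DEG[0])
aim_deg = camera_aim_relative_to_gaze()

print(f"display: {display_ppd:.1f} px/deg, camera: {camera_ppd:.1f} px/deg")
print(f"camera aim when looking straight ahead: {aim_deg:+.1f} deg")
```

Under this approximation the display is angularly denser than the full-resolution camera image, which is consistent with the later step of scaling the cropped camera image up for display.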

Fixing Camera Distortion

The Google Glass wide-field camera inevitably introduces distortions, especially at high eccentricities. Therefore, image alignment starts with correcting the captured image distortion so that the acquired image represents a correct orthographic projection. We measured the camera distortion and generated a corrective mesh. Then, the corrective mesh was used to warp the captured image to match it to the ideal image plane projection [see Fig. 1(c)].
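As an illustration of what a corrective mesh can look like, the sketch below builds a regular grid of vertices and assigns each one the texture coordinate at which the captured image should be sampled. It assumes a single-coefficient radial distortion model with a hypothetical coefficient `k1`. The mesh described in the article was derived from measurements of the actual camera, so the model, the coefficient, and the function name here are assumptions for illustration only.

```python
def build_corrective_mesh(cols, rows, k1):
    """Return a list of (x, y, u, v) vertices for an image-warping mesh.

    (x, y) is the ideal (undistorted) vertex position in [-1, 1].
    (u, v) is the texture coordinate in the captured image to sample there.
    k1 is a hypothetical radial coefficient, not a measured Glass value.
    """
    mesh = []
    for j in range(rows + 1):
        for i in range(cols + 1):
            x = -1.0 + 2.0 * i / cols
            y = -1.0 + 2.0 * j / rows
            r2 = x * x + y * y
            scale = 1.0 + k1 * r2          # assumed radial distortion model
            xd, yd = x * scale, y * scale  # where this point landed on the sensor
            u, v = (xd + 1.0) / 2.0, (yd + 1.0) / 2.0
            mesh.append((x, y, u, v))
    return mesh


# With a negative k1 (barrel distortion), samples are pulled toward the center.
mesh = build_corrective_mesh(cols=4, rows=4, k1=-0.1)
corner = mesh[0]
center = mesh[12]
print("corner vertex:", corner)
print("center vertex:", center)
```

Rendering the captured frame as a texture on such a mesh lets the GPU interpolate the correction between vertices, so the warp costs little beyond drawing the textured mesh.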

Correcting Image Zoom and View Point

The camera field of view of 75.7° is displayed on the 13° display. Therefore, the captured image has to be cropped and scaled up in size when it is displayed. Then, the image is projected onto a display plane that is rotated 10° upward (rotation around the x-axis) to compensate for the relative angular difference between the camera aiming direction and the virtual display orientation. With the image projection correction, an additional 2-D translation must be applied to bring the center of the captured image in alignment with the center of the display [see Figs. 1(b) and 1(c)]. Note that the horizontal displacement between the camera and the virtual display causes parallax and image misalignment between the projected and captured images that depends on the distances to the objects in view. Since near objects (less than 10 ft. away) are easier to recognize for most visually impaired people, a default operational correction for parallax at 10 ft. is applied. The user can select other parallax settings by swiping along the touchpad on the Google Glass.
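The crop size and the distance dependence of the parallax correction can be estimated with simple trigonometry. The sketch below is not the authors' implementation. It assumes that pixels are spread evenly over the camera's field of view, uses the 18-mm horizontal camera-to-display offset and the 1920-pixel preview width quoted elsewhere in this article, and uses function names chosen for illustration.

```python
import math

FT_TO_M = 0.3048


def crop_size_px(preview_px, camera_fov_deg, display_fov_deg):
    """Preview pixels that cover the display's field of view.

    Assumes pixels are evenly spread over angle (an approximation).
    """
    return preview_px * display_fov_deg / camera_fov_deg


def parallax_shift_px(offset_m, distance_m, display_px, display_fov_deg):
    """Horizontal shift, in display pixels, that cancels parallax at a distance."""
    angle_deg = math.degrees(math.atan2(offset_m, distance_m))
    return angle_deg * display_px / display_fov_deg


crop_w = crop_size_px(1920, 75.7, 13.0)
shift_10ft = parallax_shift_px(0.018, 10 * FT_TO_M, 640, 13.0)
shift_3ft = parallax_shift_px(0.018, 3 * FT_TO_M, 640, 13.0)

print(f"crop width: {crop_w:.0f} preview pixels")
print(f"shift at 10 ft: {shift_10ft:.1f} px, at 3 ft: {shift_3ft:.1f} px")
```

Under these assumptions the default 10-ft setting corresponds to a shift on the order of 17 display pixels, and the required shift grows quickly for nearer objects, which is why a user-selectable parallax setting is useful.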

Fig. 1: (a) The above schematic shows the Google Glass hardware configuration (not to scale). The top view illustrates the required 2-D translation dependency on the distance to the aimed object of the projected image. (b) The side view identifies the angular compensation needed between the virtual-display orientation and the camera aiming direction. In (c), the Google Glass camera lens distortion is corrected. Note that only a small portion of the captured image (marked here as a green dashed rectangle) needs to be rendered.

Implementation

Google Glass (currently) runs on Android 4.4 and fully supports OpenGL ES 2.0. Our prototype application uses the 3-D graphics hardware pipeline of Google Glass through vertex and fragment shaders. Camera distortion is corrected with an image-warping mesh, and the image projection, which controls the rotation and translation of the image, is applied in the vertex shader. Since edge enhancement is implemented at the fragment-shader level, only the visible portion of the captured image (about 328 × 185 pixels of the 1920 × 1080 pixel preview image) is processed for edge enhancement. This reduces the number of pixels processed for edge enhancement by a factor of about 34 (1920 × 1080 / (328 × 185) ≈ 34) and allows the system to reach an acceptable real-time frame rate of 30 fps.

Edge-Enhancement Control

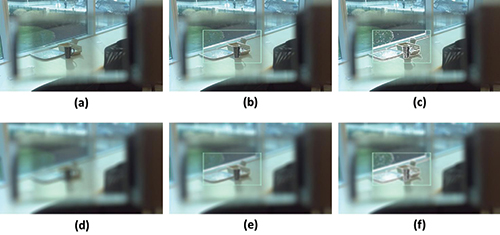

The usual implementation of edge-detection algorithms enhances all edges in the scene in proportion to the local contrast. As a result, clearly visible edges of the scene are often over-emphasized and may interfere with overall visibility. Our current implementation of the edge-detection algorithm uses double thresholding, so that the user can reduce the enhancement of strong edges by applying a two-finger pinch gesture on the touchpad (see Fig. 2).
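The idea of double thresholding can be sketched as follows. The article does not specify the edge operator or how strong edges are attenuated, so this example makes two assumptions: it uses a Sobel gradient, and it simply draws a white edge wherever the gradient magnitude lies between a lower and an upper threshold. The prototype runs in a fragment shader; plain Python is used here only for readability, and all names are illustrative.

```python
def sobel_magnitude(img):
    """Gradient magnitude of a grayscale image given as a list of rows."""
    h, w = len(img), len(img[0])
    mag = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = (img[y - 1][x + 1] + 2 * img[y][x + 1] + img[y + 1][x + 1]
                  - img[y - 1][x - 1] - 2 * img[y][x - 1] - img[y + 1][x - 1])
            gy = (img[y + 1][x - 1] + 2 * img[y + 1][x] + img[y + 1][x + 1]
                  - img[y - 1][x - 1] - 2 * img[y - 1][x] - img[y - 1][x + 1])
            mag[y][x] = (gx * gx + gy * gy) ** 0.5
    return mag


def edge_overlay(img, low, high):
    """White (1.0) where low <= gradient <= high, transparent (0.0) elsewhere."""
    mag = sobel_magnitude(img)
    return [[1.0 if low <= m <= high else 0.0 for m in row] for row in mag]


# A weak step (0.2 to 0.3) followed by a strong step (0.3 to 1.0).
row = [0.2, 0.2, 0.3, 0.3, 0.3, 0.3, 1.0, 1.0]
image = [list(row) for _ in range(5)]

weak_only = edge_overlay(image, low=0.2, high=1.0)   # strong edge suppressed
all_edges = edge_overlay(image, low=0.2, high=4.0)   # both edges drawn
print(weak_only[2])
print(all_edges[2])
```

Lowering the upper threshold leaves the already visible high-contrast edge unmarked while still enhancing the faint one, which is the effect that the pinch gesture is described as controlling.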

Enhancement using both light and dark edges can be implemented with conventional displays.4 With an optical see-through system, however, edge enhancement can only be implemented with bright (white) light because a dark (black) edge becomes transparent in a see-through optical system.5 Bright edges contribute to visibility enhancement mostly over darker portions of the scene, so the current edge enhancement shows minimal effect over brighter areas. Note the lack of enhancement on the handle of the mug in Figs. 2(e) and 2(f).
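A simple additive model illustrates why only bright edges are effective and why they help least over bright areas. In the sketch below, the display light is assumed to add to the scene light transmitted through the optics. The luminance values are hypothetical numbers chosen for illustration and are not measurements of Google Glass.

```python
def perceived_luminance(scene, overlay, transmission=1.0):
    """Additive see-through combiner: display light adds to transmitted scene light."""
    return transmission * scene + overlay


def michelson_contrast(a, b):
    """Contrast between two luminances, from 0 (none) to 1 (maximum)."""
    return abs(a - b) / (a + b)


EDGE = 100.0  # hypothetical luminance of a white edge pixel (arbitrary units)

# White edge drawn over a dark and over a bright part of the scene.
dark_scene, bright_scene = 10.0, 1000.0
contrast_on_dark = michelson_contrast(
    perceived_luminance(dark_scene, EDGE), perceived_luminance(dark_scene, 0.0))
contrast_on_bright = michelson_contrast(
    perceived_luminance(bright_scene, EDGE), perceived_luminance(bright_scene, 0.0))

# A black edge emits no light, so the scene is seen unchanged.
black_edge_view = perceived_luminance(dark_scene, 0.0)

print(f"white edge on dark scene:   contrast {contrast_on_dark:.2f}")
print(f"white edge on bright scene: contrast {contrast_on_bright:.2f}")
print(f"black edge on dark scene:   luminance {black_edge_view:.1f} (unchanged)")
```

With these example numbers the same white edge is highly visible against the dark background and nearly invisible against the bright one, and a black edge changes nothing at all.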

It is hard to measure exactly how bright the AR edges are because of the HMD optics. However, the Google Glass display is more than bright enough indoors, and the edges remain apparent outdoors thanks to the photochromic coating applied to the outer surface of the see-through display (transmission is reduced with exposure to ultraviolet light). Our pilot experiment with subjects using the described application on the Google Glass showed that people with contrast sensitivity worse than 1.50 on the Pelli-Robson contrast sensitivity test6 may benefit from the AR edge enhancement using the current system without any modification.

Fig. 2: (a) Photo of a scene through the Google Glass simulates normal vision (NV). The other photos show: (b) a view with moderate edge strength on the display with NV; (c) a view with full edge enhancement with NV; (d)-(f) simulated views of the scene with the level of enhancement in (a)-(c) by a user with impaired vision (IV) (blurred). Note improved visibility of details with enhanced edges.

Effectiveness of the Small Visual Field Edge Enhancement

The small span of the display limits the angular extent of the augmented edge enhancement, but this does not limit the effectiveness of the device. The user with impaired vision frequently has normal peripheral vision, which is available to guide the direction of the virtual display and direct the edge enhancement to objects of interest by scanning through the scene with head movements. This mode of operation is similar to the function of normal vision, where the peripheral vision, which naturally has low VA and CS, guides foveal gaze7 and ego/exocentric motion perception.8 Once the target of interest is selected, detailed inspection is mainly carried out by the foveal area, which has high VA and CS. Similarly, visually impaired users use the highest-sensitivity area of their retina to examine the enhanced image.

Potential Impact

We demonstrated that augmented vision enhancement can be efficiently implemented on Google Glass, providing a visual aid for people with impaired vision. This is an approach that we evaluated with earlier devices, and Google Glass shows improved performance. The compact and aesthetically pleasing hardware design of Google Glass is also more readily accepted by the general population. We believe this demonstration is encouraging with respect to the possibility of developing an HMD-based vision enhancement device for people with impaired vision at reasonable cost and high functionality.

We are currently implementing and testing additional applications using Glass, such as a scene-magnifying app that provides functionality similar to that of the spectacle-mounted (bioptic) telescopes that are commonly used by people with impaired vision, but with controllable magnification levels, and a cartoonized scene-minifying app for people with restricted peripheral (tunnel) vision. Such a system has been previously shown to be beneficial for patients.5 We are also developing Google Glass apps for other specific tasks that people with visual impairments have difficulties with, such as distant face recognition and collision judgment and avoidance.

References

1. E. Peli, “Wide-band image enhancement,” U.S. Patent 6,611,618, Washington, DC (2003).

2. E. Peli, G. Luo, A. Bowers, N. Rensing, “Development and evaluation of vision multiplexing devices for vision impairments,” International Journal on Artificial Intelligence Tools 18(03), 365–378 (2009).

3. G. Luo, E. Peli, “Use of an augmented-vision device for visual search by patients with tunnel vision,” Investigative Ophthalmology & Visual Science 47(9), 4152–4159 (2006).

4. H. L. Apfelbaum, D. H. Apfelbaum, R. L. Woods, E. Peli, “The effect of edge filtering on vision multiplexing,” J. Soc. Info. Display 1398–401 (2005).

5. G. Luo, N. Rensing, F. Weststrate, E. Peli, “Registration of an on-axis see-through head-mounted display and camera system,” Optical Engineering 44(2), 024002 (2005).

6. D. Pelli, J. Robson, “The design of a new letter chart for measuring contrast sensitivity,” Clinical Vision Sciences 2(3), 187–199 (1988).

7. I. T. C. Hooge, C. I. Erkelens, “Peripheral vision and oculomotor control during visual search,” Vision Research 39(8), 1567–1575 (1999).

8. W. H. Warren, K. J. Kurtz, “The role of central and peripheral vision in perceiving the direction of self-motion,” Perception & Psychophysics 51(5), 443–454 (1992).

Alex D. Hwang and Eli Peli are with Schepens Eye Research Institute at the Massachusetts Eye and Ear Institute, Harvard Medical School. Prof. Eli Peli is a Fellow of the SID and can be reached at eli.peli@schepens.harvard.edu.